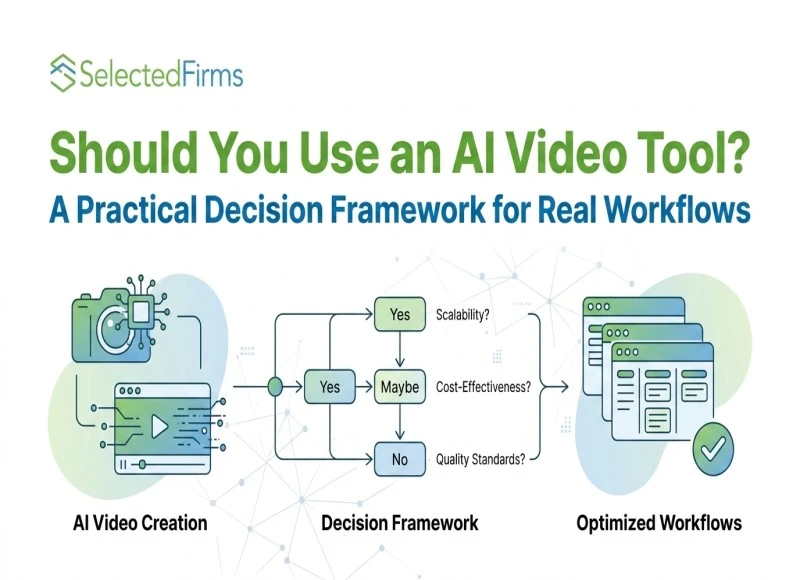

Should You Use an AI Video Tool? A Practical Decision Framework for Real Workflows

Last Updated: 10 Apr 2026 | Read Time: 5 Min Read | Written By: Elia Martell

A clear, practical guide to evaluating AI video tools. Understand how iteration, control, and workflow alignment affect performance and when these tools can genuinely enhance your content strategy.

Most people approach AI video tools with one question: which one is the best? It's a natural starting point, but it's the wrong one. The better question is far more specific, and answering it honestly reshapes how you evaluate and choose a tool.

The wrong question most people ask

Most discussions around AI video tools focus on whether a given option is "good," or whether it is "better" than the competition. That question is incomplete.

The more relevant question is whether it fits the way you actually create content. In AI video, capability alone doesn't determine usefulness; compatibility does. A powerful system can still feel unusable if it doesn't align with how you work.

That's why the right way to evaluate any AI video tool is not through features alone, but through a structured decision framework. And that framework starts with expectations.

Step 1: Define what you expect from an AI video tool

The biggest mismatch begins at the expectation level.

If the expectation is simple — enter a prompt and receive a polished, ready-to-use video — many tools will feel inconsistent. Most are not built to operate as one-click generators.

Instead, the more capable tools are built for guided creation. Outputs improve when inputs are structured: reference images, motion cues, clear directional prompts. This shifts your role from "prompt writer" to "output guide."

If immediate perfection is the goal, the tool will feel inefficient. If progressive improvement is acceptable, the workflow starts to make sense.

For business owners specifically, the expectation gap often comes from comparing AI video to traditional production. The mental model of "hire a videographer, get a finished asset" does not translate. A more accurate mental model is "hire a junior creative who gets sharper with every brief you give them."

Output quality improves over time, but only if your direction is consistent and intentional. Teams that brief the tool the way they'd brief a human creator tend to get far better results than those who treat it as a vending machine.

Step 2: Evaluate your tolerance for iteration

Strong AI video tools are not designed to eliminate iteration — they're designed to make iteration more meaningful.

Every output is part of a refinement cycle rather than a final result. Small adjustments, repeated attempts, and input changes are not exceptions; they are the process.

This creates a clear divide between users:

- Those who expect finished output in one attempt will see iteration as friction.

- Those who treat iteration as part of the process will see it as progress.

The best tools reward consistency and input quality over time.

Step 3: Decide between speed and control

Most AI video tools prioritize speed. The more sophisticated ones balance speed with control.

They can generate outputs quickly, but their real advantage lies in control — how precisely you can guide the result using structured inputs. This makes them more suitable for workflows where consistency matters more than instant output.

In practice:

- If speed alone is the goal, simpler tools may deliver faster results.

- If controlled, repeatable output is the goal, a more structured tool offers a stronger advantage.

For business teams managing content at scale — product launches, campaign cycles, social calendars — the control advantage compounds. When a tool allows you to maintain visual consistency across dozens of outputs, it reduces the review-and-revision burden on your team significantly.

When you're producing ten assets a week, raw generation speed matters less than consistency. The question to ask internally is not "how fast does this generate a video?" but "how reliably can we reproduce our brand look across outputs?"

Step 4: Match the tool to your use case

Effectiveness depends heavily on context. AI video tools generally perform well for:

- Short-form content and ad creatives, where generating and refining multiple variations quickly fits naturally into the workflow.

- Visual experimentation — where iteration is expected and imperfect outputs are part of the process.

For high-precision production or complex cinematic scenes, most AI video tools still require significant manual support. They complement traditional tools rather than replace them.

The key insight: these tools are context-dependent, not universally applicable.

Step 5: Assess access before capability

A common mistake is evaluating AI tools based only on their feature list.

In reality, access determines usability.

Many platforms restrict usage through credit systems or limited trials. This changes behavior — users hesitate to experiment, avoid multiple iterations, and never fully integrate the tool into their workflow. Without consistent access, even an advanced model remains underutilized.

Before committing to any tool, ask: Can I use this as many times as my workflow demands, without constantly managing credits or hitting walls?

Step 6: Understand how continuous access changes the workflow

When usage is not restricted, the entire workflow evolves.

Using Seedance 2.0 through Topview removes the friction associated with limited access.

Instead of managing attempts, the focus shifts to improving output.

Iteration becomes natural. Experimentation increases. And over time, the system integrates into the workflow rather than remaining a one-time tool.

Topview’s Business Annual plan, for example, offers 365 days of unlimited access to the Seedance 2.0 AI video model. With no credits or short trials to ration, you can use it consistently, which is what makes it practical for real content workflows.

Once access is no longer a constraint, the final step is to bring all these decisions together.

Decision summary: When an AI video tool makes practical sense

The decision isn't based on a single factor. It depends on a combination of conditions:

- Expectations aligned with guided, iterative output

- Willingness to treat iteration as progress, not friction

- Preference for controlled output over instant results

- A use case suited for short-form, experimental, or iterative content

- Access that allows consistent, unrestricted usage

When these conditions are met, almost any capable AI video tool can deliver real value. When they're missing, even the most advanced model will feel inefficient.

Final perspective: a tool defined by how it's used

No AI video tool is a universal solution. Its value is determined by how it fits into your workflow — not just what it can technically generate.

It won't remove effort. It won't eliminate iteration. But it can transform how effort is applied — shifting you from repetitive, isolated attempts toward a genuine refinement process. That's the difference between a tool you test once and one that earns a permanent place in how you work.
